From the Lab

SLIC Part 1 - Random Colours

Bernard Llanos — July 11, 2013 - 12:09pm

Introduction

Simple Linear Iterative Clustering is a recently-developed algorithm for segmenting images into regions of similar pixels.

Spacing Control for Coordinated Particle System (1)

Chujia Wei — July 9, 2013 - 3:37pm

If each pixel in a map has a spacing value, and particles must adjust their distance to their neighbors according to that value, then we can control the spacing of particles based on an image. If the spacing value is also tied to the gradient value, the particles will be able to outline objects in the image by changing their spacing.

As shown in the image below, the canvas is divided into two areas with different spacing values. When particles enter an area with a smaller spacing, they are less likely to kill adjacent neighbors and more likely to generate new ones.

The code for spacing control (AB is the distance between particles A and B; Td is the threshold of the current pixel; and V is a spacing variable for the current pixel):

average_Td = (A_Td + B_Td) / 2; // get the average threshold of the pixels that A and B are currently in

average_V = (A_V + B_V) / 2; // get the average spacing variable

BTH_offset = 1.41;

DTH_offset = 0.58;

if (AB < DTH_offset * average_Td * average_V)

    merge A and B into one particle;

else if (AB > BTH_offset * average_Td * average_V)

    create a new particle between A and B;

// NOTE: All pixels on the left half of the canvas are set to V = 1, and all pixels on the right half to V = 0.8. The threshold Td is set to 10 throughout the canvas.
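
As a rough, runnable illustration of the rule above, here is a minimal Python sketch. It reads "average" as the arithmetic mean of the two particles' values, as the comments in the pseudocode suggest. The function name `spacing_action` and the returned labels are hypothetical and are not taken from the actual particle system.

```python
import math

BTH_OFFSET = 1.41  # multiplier for the "create a new particle" test (value from the post)
DTH_OFFSET = 0.58  # multiplier for the "merge" test (value from the post)


def spacing_action(a_pos, b_pos, a_td, b_td, a_v, b_v):
    """Decide what to do with particles A and B (hypothetical helper).

    a_pos, b_pos: (x, y) positions of the two particles.
    a_td, b_td:   threshold Td of the pixel under each particle.
    a_v, b_v:     spacing variable V of the pixel under each particle.
    Returns "merge", "split" or "keep".
    """
    ab = math.dist(a_pos, b_pos)       # distance AB
    average_td = (a_td + b_td) / 2     # assumption: mean of the two thresholds
    average_v = (a_v + b_v) / 2        # assumption: mean of the two spacing values
    target = average_td * average_v
    if ab < DTH_OFFSET * target:
        return "merge"                 # too close: merge A and B
    if ab > BTH_OFFSET * target:
        return "split"                 # too far: create a particle between them
    return "keep"


# Same pair distances on the left half (V = 1) and right half (V = 0.8), Td = 10.
print(spacing_action((0, 0), (5, 0), 10, 10, 1.0, 1.0))    # left half, distance 5
print(spacing_action((0, 0), (5, 0), 10, 10, 0.8, 0.8))    # right half, distance 5
print(spacing_action((0, 0), (12, 0), 10, 10, 0.8, 0.8))   # right half, distance 12
```

With these settings, a pair 5 units apart falls below the merge threshold on the left half but not on the right half, and a pair 12 units apart exceeds the creation threshold only on the right half. This matches the description that a smaller spacing value leads to fewer kills and more new particles.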

Cross-Filtering on Randomly-Coloured Grids

Bernard Llanos — July 9, 2013 - 11:23am

A simple experiment wherein the first image below was used to generate filtering masks, but the colour data used to determine new pixel values was obtained from images similar to the second image below:

A Closer Look at Mask Shapes and Heap Sizes

Bernard Llanos — July 8, 2013 - 3:05pm

Background

Parallel computation is a possible way to increase the speed of the cumulative range geodesic filter. However, calculating the filtered value of many pixels simultaneously means that memory needs to be allocated for the working data of multiple pixels at once, potentially increasing memory usage significantly.

In order to find an upper bound on the memory needed to filter a single pixel, it is necessary to examine the number of neighbouring pixels involved in the filtering process.

Filtering Using a Plane

Bernard Llanos — July 3, 2013 - 12:18pm

Inspiration and Background

Prasun Choudhury and Jack Tumblin used a first-order approximation to the colour of a source pixel when determining the degree of similarity between the source pixel and its neighbours. Their results are described in the following article: "The Trilateral Filter for High Contrast Images and Meshes", Eurographics Symposium on Rendering 2003, pages 1-11 (edited by Per Christensen and Daniel Cohen-Or).

The overall idea is to describe the neighbourhood of the source pixel with a plane respecting the average colour gradient in the area. We hope this will allow for smoothing which follows gradients in the image more closely than if we simply used averages of pixel values to describe regions.

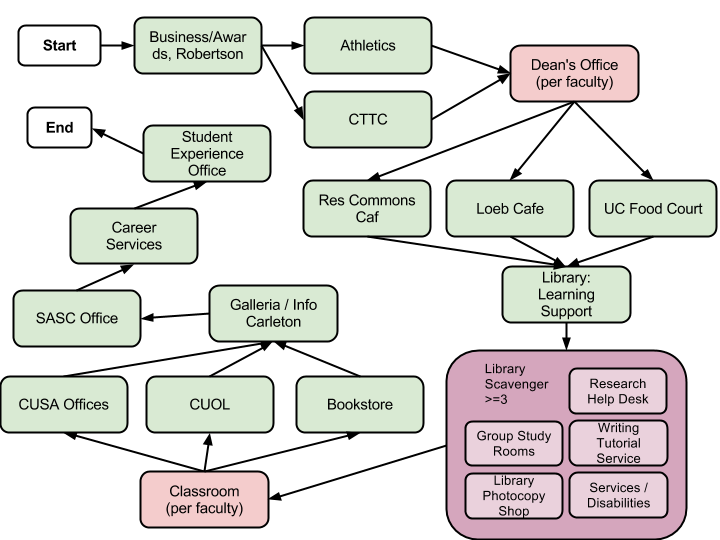
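
To make the plane idea concrete, here is a small Python sketch under simplifying assumptions: a greyscale patch stored as a list of rows, and a plain average of forward differences standing in for the gradient estimate. The function names are hypothetical, and this is neither the gradient estimate from the trilateral filter paper nor necessarily the one used in this project.

```python
def average_gradient(patch):
    """Mean forward difference along x (columns) and y (rows) of a 2D patch."""
    rows, cols = len(patch), len(patch[0])
    gx_terms = [patch[r][c + 1] - patch[r][c]
                for r in range(rows) for c in range(cols - 1)]
    gy_terms = [patch[r + 1][c] - patch[r][c]
                for r in range(rows - 1) for c in range(cols)]
    return sum(gx_terms) / len(gx_terms), sum(gy_terms) / len(gy_terms)


def plane_residual(patch, row, col):
    """Difference between pixel (row, col) and a plane through the patch centre.

    The plane passes through the centre pixel's value and is tilted by the
    average gradient, so a residual near zero means the pixel follows the
    local gradient rather than merely matching the centre value.
    """
    centre_row, centre_col = len(patch) // 2, len(patch[0]) // 2
    gx, gy = average_gradient(patch)
    predicted = (patch[centre_row][centre_col]
                 + gx * (col - centre_col)
                 + gy * (row - centre_row))
    return patch[row][col] - predicted


# A perfect ramp: every pixel lies exactly on the plane.
ramp = [[0, 1, 2],
        [10, 11, 12],
        [20, 21, 22]]
print(average_gradient(ramp))
print(plane_residual(ramp, 0, 2))
```

On the ramp, a comparison against the centre value alone would treat the corner pixels as very different from the centre, while the plane-based residual is zero everywhere. This is the sense in which smoothing can follow a gradient.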

Carleton Quest 2.0: New Location Map

Gail Carmichael — July 2, 2013 - 5:59pm

I've been working on mapping out a new set of locations to visit in our QR code adventure app, Carleton Quest, which we want to have ready for this fall's frosh week. A big requirement was to reduce the amount of time it would take to visit all the locations. This meant fewer locations, or at least more locations that are very close together. This is the map we came up with.

Road Map for "Coherent Emergent Stories"

Gail Carmichael — June 27, 2013 - 12:02pm

This is a rough road map of the problems that need to be solved by our coherent emergent story system. You can learn a bit more about this project via the poster we presented at GRAND 2013.

Applying prerequisites and assigning scores to scenes using modifiers:

- What are the best modifiers to use in calculating a scene’s score?

- What is the best way to calculate the score (e.g. a simple formula)?

- How will gameplay (e.g., movement/location, combat (?), explicit choices through dialogue or menus) affect the values used in the calculations? (It should be strongly connected.)

- How can this approach direct players into a certain path according to their choices (for example, losing too much innocence might change what story paths are available for the rest of the game)?

Deciding when and how to present scenes to players:

- When should a scene be chosen from the top scoring scene(s) to be offered to the player? Alternatively, when should a scene be available anytime so long as its current score is above a threshold? (can use tags to facilitate?)

- When should players be given a choice about consuming a scene and when will a scene’s presentation be triggered automatically? (design questions, make sure to support with framework)

- When there is a choice, how can it be creatively presented to the player? How can we foreshadow the consequences of choices?

Modifying scenes dynamically:

- When and how should we dynamically change a scene to connect back to the story the player has seen so far? (Dialogue, objects, motifs, location)

- How should we modify a scene to fit in with current game state (e.g., location, character availability)?

Ambiguity and development of threads (thematic, character arcs):

- How can we encourage multiple interpretations of core story events?

- How can recurring motifs be introduced into scenes in a meaningful way? (e.g. through objects that can be in the environment, colours, etc.)

4-Neighbour vs. 8-Neighbour Graph Models of an Image

Bernard Llanos — June 26, 2013 - 12:11pm

Introduction

When building filtering masks around each pixel in an image, the filter needs to know which pixels are adjacent to a given pixel. Up until now, I have been using a 4-neighbour configuration, in which each pixel is adjacent to the pixels immediately to the left and right, immediately above, and immediately below.

Recently, I tried using an 8-neighbour configuration, in which each pixel is also adjacent to the four closest pixels along diagonal offsets, in addition to the pixels above and below and to the left and right.
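
The two configurations can be sketched as lists of (row, column) offsets. This is a minimal Python illustration; the names `FOUR_NEIGHBOUR_OFFSETS`, `EIGHT_NEIGHBOUR_OFFSETS` and `neighbours` are hypothetical and are not taken from the filter's actual code.

```python
# Offsets as (row delta, column delta).
FOUR_NEIGHBOUR_OFFSETS = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # above, below, left, right
DIAGONAL_OFFSETS = [(-1, -1), (-1, 1), (1, -1), (1, 1)]
EIGHT_NEIGHBOUR_OFFSETS = FOUR_NEIGHBOUR_OFFSETS + DIAGONAL_OFFSETS


def neighbours(row, col, height, width, offsets):
    """Return the in-bounds pixels adjacent to (row, col) for the given offsets."""
    result = []
    for d_row, d_col in offsets:
        r, c = row + d_row, col + d_col
        if 0 <= r < height and 0 <= c < width:  # skip positions outside the image
            result.append((r, c))
    return result


# Interior pixel of a 3x3 image, then a corner pixel.
print(neighbours(1, 1, 3, 3, FOUR_NEIGHBOUR_OFFSETS))
print(neighbours(1, 1, 3, 3, EIGHT_NEIGHBOUR_OFFSETS))
print(neighbours(0, 0, 3, 3, EIGHT_NEIGHBOUR_OFFSETS))
```

Switching between the two graph models then only requires passing a different offset list to the code that grows the mask.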

Basic Cumulative Range Geodesic Filtering Results

Bernard Llanos — June 26, 2013 - 11:46am

I uploaded a gallery of images to demonstrate the behaviour of the cumulative range geodesic filter at various parameter values.

The gallery is available here.

This version of the filter is a replication of the prototype version created by Dr. Mould (see http://gigl.scs.carleton.ca/node/481).

From the images, it is evident that the masks created by the filter can be very irregular in shape. As the "gamma" parameter increases, the mask boundaries are pulled closer to the source pixel, resulting in more circular mask shapes and a softer output image.

Supplement: More on Filtering Speed vs. Aspect Ratio

Bernard Llanos — June 21, 2013 - 9:41am

In my previous post (http://gigl.scs.carleton.ca/node/495), the plots of filtering time vs. aspect ratio perhaps hide what is actually going on. The two plots below should make more sense.